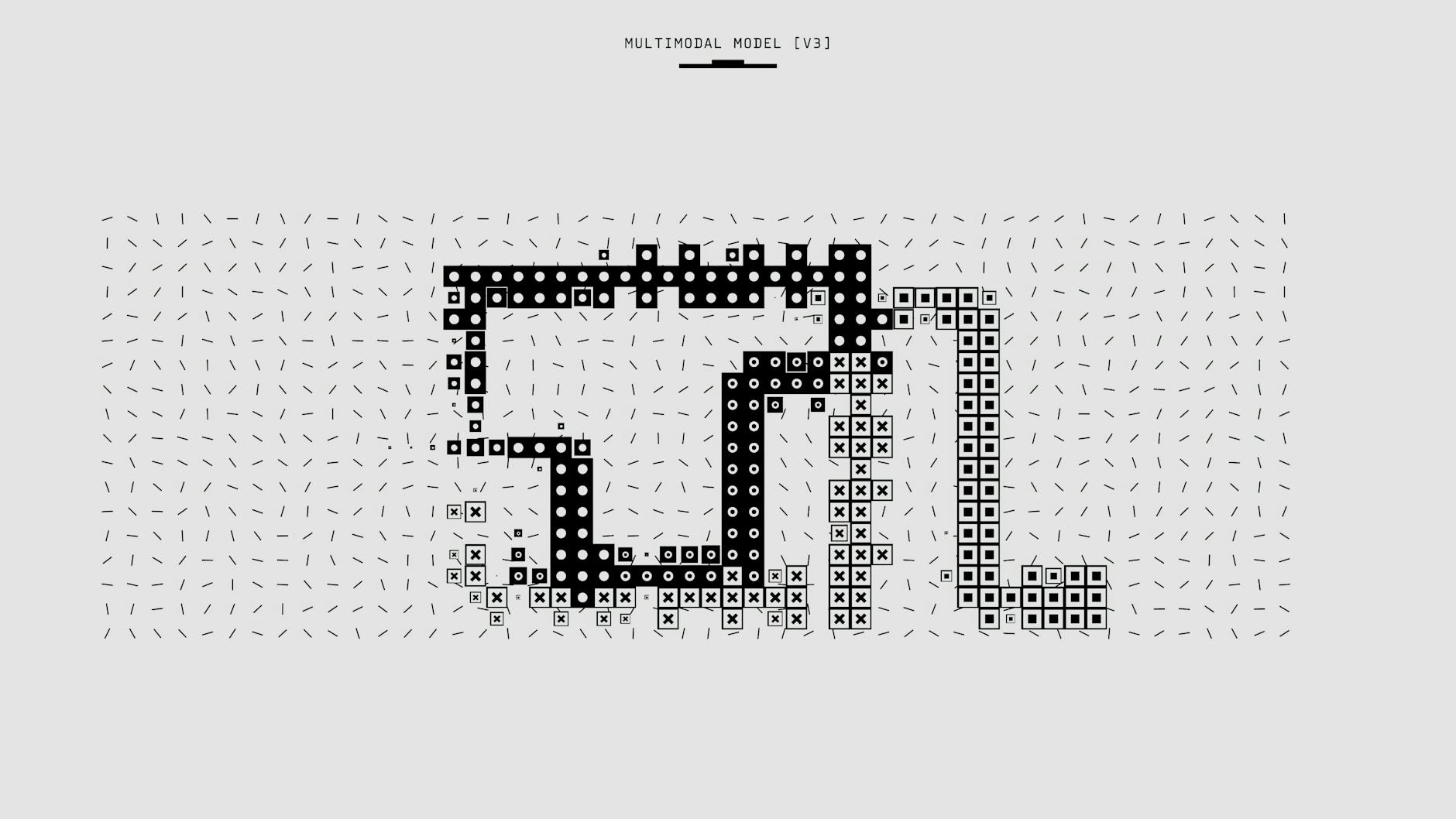

Understanding MetaEmbed: How Flexible Late Interaction Enables Scalable Multimodal Retrieval at Test Time

MetaEmbed is a novel approach to multimodal retrieval that offers significant advantages in scalability and flexibility. It decouples the modality encoders from the final cross-modal scorer, so late interaction can happen at test time without retraining. This key innovation allows vision, language, and other modalities to be combined after initial retrieval, which makes it quick to bring in new data sources.

Key Features of MetaEmbed

- Two-stage retrieval: A fast first stage generates candidate results using lightweight signals, followed by a more precise second stage that cross-modally re-ranks the candidates.

- Scalable architecture: By limiting computationally expensive cross-modal scoring to a smaller candidate set, MetaEmbed maintains low latency even with massive archives.

- Flexible late interaction: Enables combining different modalities (vision, language, etc.) at the scoring stage, allowing adaptation to new data sources without model retraining. This is achieved through a dedicated late-interaction scorer.
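The two-stage flow described in the list above can be sketched in a few lines. This is a minimal illustration, not MetaEmbed's actual implementation: the function names (`retrieve_candidates`, `rerank`), the toy vectors, and the stand-in `expensive_score` are all assumptions made for the example.

```python
import heapq

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def retrieve_candidates(query_vec, coarse_index, k):
    """Stage 1: cheap top-k over one small vector per item."""
    scored = ((dot(query_vec, vec), item_id) for item_id, vec in coarse_index.items())
    return [item_id for _, item_id in heapq.nlargest(k, scored)]

def rerank(query, candidates, expensive_score):
    """Stage 2: run the costly scorer only on the short candidate list."""
    return sorted(candidates, key=lambda item_id: expensive_score(query, item_id), reverse=True)

# Toy archive (hypothetical data): a small coarse vector and a richer fine vector per item.
coarse_index = {"a": [1.0, 0.0], "b": [0.9, 0.1], "c": [0.0, 1.0], "d": [-1.0, 0.0]}
fine_vectors = {
    "a": [1.0, 0.0, 0.0],
    "b": [0.9, 0.1, 0.8],
    "c": [0.0, 1.0, 0.9],
    "d": [-1.0, 0.0, 0.1],
}

calls = []  # records which items the expensive scorer was asked about

def expensive_score(query, item_id):
    # Stand-in for a cross-modal scorer; here just a dot product on the fine vectors.
    calls.append(item_id)
    return dot(query, fine_vectors[item_id])

candidates = retrieve_candidates([1.0, 0.0], coarse_index, k=2)
ranking = rerank([1.0, 0.0, 1.0], candidates, expensive_score)
print(candidates, ranking, len(calls))
```

The expensive scorer runs on only `k` items no matter how large the archive is, which is where the latency benefit comes from. The trade-off is that an item dropped by the first stage can never be recovered by the second, so `k` has to be chosen with recall in mind.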

This two-stage approach is inspired by prior research, such as the ECIR 2011 paper “Dynamic Two-Stage Image Retrieval from Large Multimodal Databases”, which demonstrated the effectiveness of separating candidate retrieval from re-ranking for scalable multimodal search.

How MetaEmbed Works

MetaEmbed employs independent encoders for each modality (vision, language, etc.), projecting their outputs into a shared cross-modal embedding space. This allows for comparisons using a single scoring mechanism across modalities, without the need to retrain the encoders when adding new modalities.

In essence, MetaEmbed treats different signals as pieces of a single searchable puzzle. This contrasts with approaches that merge modalities early in the pipeline, which tend to be less scalable and harder to adapt, because changing one modality usually means retraining the fused model.
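To make the "independent encoders, shared space" idea concrete, here is a small sketch. Everything in it is hypothetical: the `SharedSpace` registry, the toy encoders, and the hand-written projection matrices stand in for trained components and are not taken from MetaEmbed itself.

```python
import math

def matvec(matrix, vec):
    """Multiply a (rows x cols) matrix by a length-cols vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]

def l2_normalize(vec):
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else list(vec)

class SharedSpace:
    """Registry of per-modality encoders, each with its own projection into one space."""

    def __init__(self):
        self._modalities = {}

    def register(self, name, encoder, projection):
        # Adding a modality touches only this entry; existing entries are unchanged.
        self._modalities[name] = (encoder, projection)

    def embed(self, name, raw):
        encoder, projection = self._modalities[name]
        return l2_normalize(matvec(projection, encoder(raw)))

# Toy encoders with different native dimensions (assumptions for illustration only).
def toy_text_encoder(text):
    return [float(len(text)), float(len(text.split()))]          # 2-d

def toy_image_encoder(pixels):
    return [sum(pixels) / len(pixels), max(pixels), min(pixels)]  # 3-d

space = SharedSpace()
space.register("text", toy_text_encoder, [[0.1, 0.0], [0.0, 0.5]])                  # 2-d -> 2-d
space.register("image", toy_image_encoder, [[1.0, 0.0, 0.0], [0.0, 1.0, -1.0]])     # 3-d -> 2-d

text_vec = space.embed("text", "a red car")
image_vec = space.embed("image", [0.2, 0.8, 0.5])
similarity = sum(t * i for t, i in zip(text_vec, image_vec))  # one scorer for both modalities
print(text_vec, image_vec, similarity)
```

Because each modality owns its encoder and projection, registering a new one (say, audio) leaves the text and image embeddings already stored in the index untouched. In a real system the new projection would still need to be trained so that it aligns with the existing space.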

The late-interaction scorer plays a critical role. It allows flexible fusion strategies, including simple dot-product scoring, cross-attention, and learned bilinear pooling. This flexibility enables the system to adapt to new data and fusion requirements at query time.
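The sketch below shows how a scorer could be swapped at query time. It covers dot-product and bilinear scoring from the paragraph above, and adds a MaxSim scorer (the sum-of-max token matching popularized by ColBERT), which is not named in this article and is included only as a familiar example of late interaction. Cross-attention is left out for brevity. The `SCORERS` registry, the function names, and the weight matrix are assumptions for illustration.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def mean_pool(vectors):
    """Average a list of equal-length vectors into one vector."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]

def dot_product_score(query_vecs, item_vecs):
    """Pool each side to a single vector, then take the dot product."""
    return dot(mean_pool(query_vecs), mean_pool(item_vecs))

def make_bilinear_score(weights):
    """Return a scorer computing q^T W d on the pooled vectors; W would be learned."""
    def score(query_vecs, item_vecs):
        q, d = mean_pool(query_vecs), mean_pool(item_vecs)
        return dot(q, [dot(row, d) for row in weights])
    return score

def maxsim_score(query_vecs, item_vecs):
    """For each query vector take its best-matching item vector, then sum the matches."""
    return sum(max(dot(q, d) for d in item_vecs) for q in query_vecs)

# Hypothetical registry: the fusion strategy is picked by name at query time.
SCORERS = {
    "dot": dot_product_score,
    "bilinear": make_bilinear_score([[2.0, 0.0], [0.0, 1.0]]),  # hand-set W for the demo
    "maxsim": maxsim_score,
}

query_tokens = [[1.0, 0.0], [0.0, 1.0]]
item_tokens = [[1.0, 0.0], [0.0, 1.0]]
results = {name: scorer(query_tokens, item_tokens) for name, scorer in SCORERS.items()}
print(results)
```

All three scorers consume the same stored vectors, so switching between them requires no re-encoding of the archive. Only the bilinear weights (and, in a fuller version, the cross-attention parameters) would need training.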

Benefits of Flexible Late Interaction

The flexibility of MetaEmbed offers several key advantages:

- Scalability: Handles massive heterogeneous multimodal archives.

- Extensibility: Easily adds new modalities without extensive retraining.

- Improved retrieval quality: Leverages late fusion signals for enhanced accuracy.

MetaEmbed’s architecture contrasts with general-purpose models such as GPT-4-Turbo and Claude Opus. While these models offer strong integrated reasoning, they may be less scalable as retrieval systems and may require retraining to support new modalities. For large multimodal archives with evolving data sources, MetaEmbed’s design favors speed and scalability. A detailed comparison is provided in the table below.

Evaluation and Benchmarks

A robust evaluation framework is crucial. It should use diverse benchmark datasets (e.g., MS COCO, Flickr30k, Conceptual Captions) and report multiple metrics, such as Recall@K, mAP, NDCG, and latency. Comparisons against GPT-4-Turbo and Claude Opus in controlled settings are also essential.
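For reference, the ranking metrics named above can be computed as follows. This is a plain-Python sketch using the standard definitions with binary relevance; the document IDs are made up, and mAP is simply the mean of `average_precision` over all queries.

```python
import math

def recall_at_k(ranked, relevant, k):
    """Fraction of the relevant items that appear in the top k results."""
    hits = sum(1 for item in ranked[:k] if item in relevant)
    return hits / len(relevant)

def average_precision(ranked, relevant):
    """Mean of precision@rank taken at each rank where a relevant item appears."""
    hits, precision_sum = 0, 0.0
    for rank, item in enumerate(ranked, start=1):
        if item in relevant:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / len(relevant)

def ndcg_at_k(ranked, relevant, k):
    """Binary-gain NDCG: DCG of this ranking divided by DCG of an ideal ranking."""
    dcg = sum(1.0 / math.log2(i + 2) for i, item in enumerate(ranked[:k]) if item in relevant)
    ideal_hits = min(len(relevant), k)
    idcg = sum(1.0 / math.log2(i + 2) for i in range(ideal_hits))
    return dcg / idcg if idcg else 0.0

ranked = ["d3", "d1", "d7", "d2"]   # system output, best first (hypothetical IDs)
relevant = {"d1", "d2"}             # ground-truth relevant items

r2 = recall_at_k(ranked, relevant, k=2)
ap = average_precision(ranked, relevant)
ndcg = ndcg_at_k(ranked, relevant, k=4)
print(r2, ap, ndcg)
```

Latency is best measured separately, for example by timing each retrieval stage with `time.perf_counter()` over many queries and reporting percentiles rather than a single mean.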

Conclusion

MetaEmbed’s flexible late interaction approach represents a significant advancement in scalable multimodal retrieval. Its modular design, ability to adapt to new data without retraining, and data-driven evaluation framework make it a powerful tool for handling massive and evolving multimodal data sources.

| Feature | MetaEmbed | GPT-4-Turbo | Claude Opus |
|---|---|---|---|
| Modality Support | Multimodal (extendable) | Text & Image | Text & Image |
| Retrieval Paradigm | Dense embedding with indexing | Prompting, optional vector stores | Prompting, optional vector stores |
| Latency & Scalability | Low latency, high scalability | Higher latency | Higher latency |
| Accuracy | Strong for precise matching | Good, potentially better in complex queries | Good, potentially better in complex queries |
| Reasoning | Primarily retrieval-focused | High reasoning ability | High reasoning ability |
