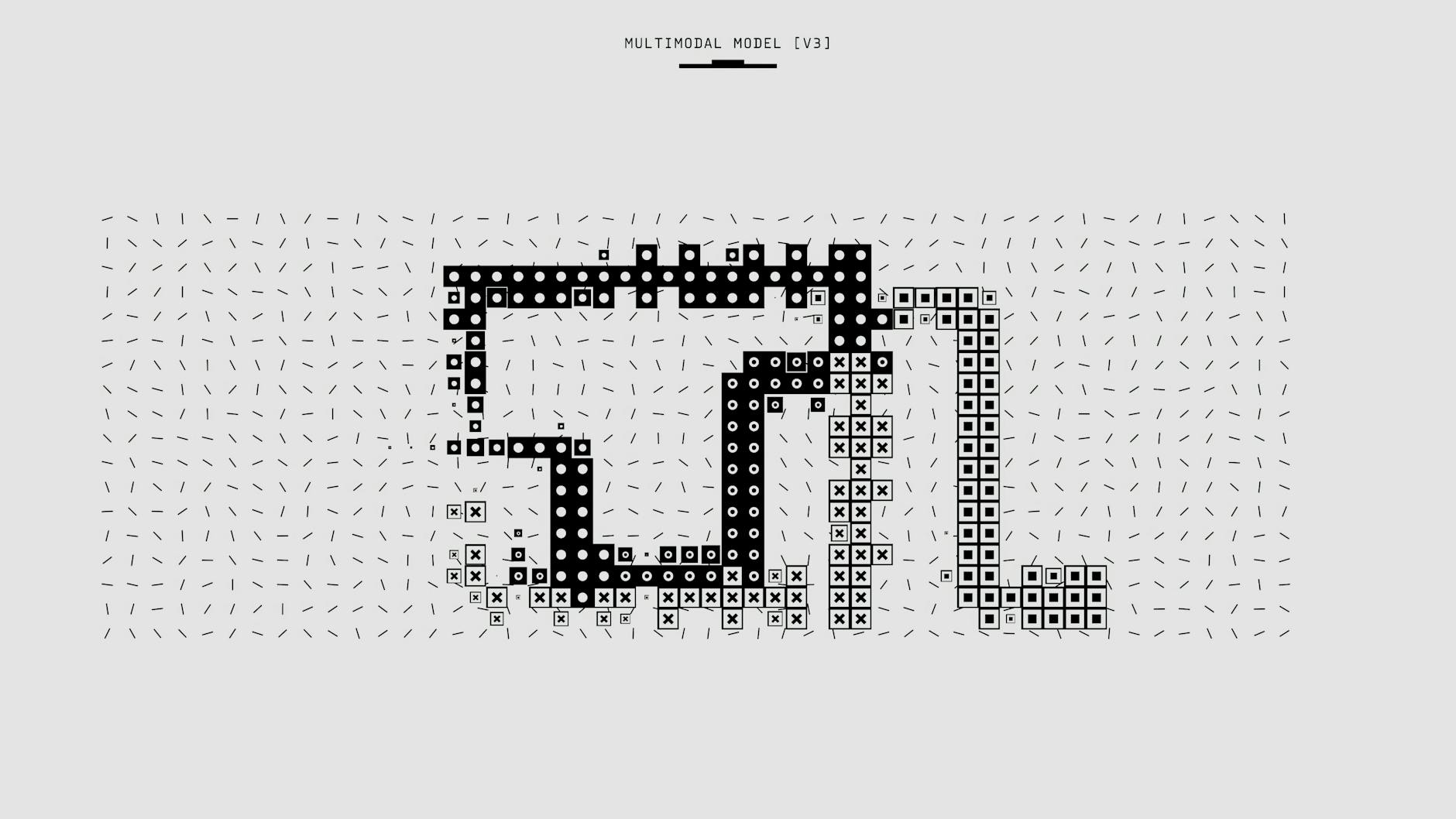

bytedance/dolphin: Install, Run, and Inference Guide

Explicit Environment Setup and Command-Line Guidance

This section provides explicit guidance for setting up your environment and running commands for the bytedance/dolphin model.

Environment Requirements:

- OS & Python: Ubuntu 22.04 LTS or Windows 11 (WSL2); Python 3.11.x x64 with a supported C/C++ toolchain (gcc/clang).

- GPU hardware: NVIDIA GPUs with ≥16 GB VRAM; recommended RTX 3090/4090 or A100/A800; NVIDIA driver 525+ and CUDA toolkit 11.8+.

Installation Steps:

- Virtual environment: Create and activate a virtual environment:

python -m venv venv
source venv/bin/activate  # Linux/macOS
venv\Scripts\activate  # Windows

- Core libraries: install PyTorch with CUDA support:

pip install --upgrade pip
pip install torch==2.1.0+cu118 torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/cu118

- Repository setup: Clone the repository and install dependencies:

git clone https://github.com/bytedance/dolphin.git
cd dolphin
git status  # Confirm branch is main or release
pip install -r requirements.txt
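After installation, a quick check along the following lines can confirm that the interpreter and GPU match the requirements listed earlier. This is a minimal sketch, not part of the Dolphin repository: `check_environment` and the report keys are hypothetical names, and the 16 GB threshold simply mirrors the VRAM figure given above. It relies only on standard PyTorch calls (`torch.cuda.is_available`, `torch.cuda.get_device_properties`).

```python
import platform
import sys


def check_environment(min_vram_gb: float = 16.0) -> dict:
    """Collect basic facts about the Python and GPU setup (hypothetical helper)."""
    report = {
        "python": platform.python_version(),
        "python_ok": sys.version_info[:2] == (3, 11),
        "torch": None,
        "cuda_available": False,
        "gpus": [],
    }
    try:
        import torch  # imported lazily so the check still runs if the install failed
    except ImportError:
        return report

    report["torch"] = torch.__version__
    report["cuda_available"] = torch.cuda.is_available()
    if report["cuda_available"]:
        for index in range(torch.cuda.device_count()):
            props = torch.cuda.get_device_properties(index)
            # Decimal gigabytes, so a nominal 16 GB card is not rejected by rounding.
            vram_gb = props.total_memory / 1e9
            report["gpus"].append(
                {
                    "name": props.name,
                    "vram_gb": round(vram_gb, 1),
                    "vram_ok": vram_gb >= min_vram_gb,
                }
            )
    return report
```

Printing the returned dictionary before the first demo run makes it easier to tell a missing CUDA build of PyTorch apart from an undersized GPU.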

Checkpoint Access:

Checkpoints are hosted on official hubs such as Hugging Face, for example https://huggingface.co/bytedance/dolphin-base-3b. If access is restricted, authenticate with `huggingface-cli login`. For large files, pull with Git LFS or the Hugging Face CLI.
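The same download can be scripted from Python. The sketch below assumes the `huggingface_hub` package is installed and that the repo id matches the URL above; `checkpoint_dir` and `download_checkpoint` are hypothetical helper names, while `snapshot_download` is the library's own function.

```python
from pathlib import Path


def checkpoint_dir(repo_id: str, root: str = "./checkpoints") -> Path:
    """Map a hub repo id such as 'org/name' to a local folder under root."""
    return Path(root) / repo_id.split("/")[-1]


def download_checkpoint(repo_id: str, root: str = "./checkpoints") -> Path:
    """Download every file of a hub repo into a local folder and return its path."""
    # Imported lazily so the path helper works without huggingface_hub installed.
    from huggingface_hub import snapshot_download

    target = checkpoint_dir(repo_id, root)
    snapshot_download(repo_id=repo_id, local_dir=str(target))
    return target
```

Calling `download_checkpoint("bytedance/dolphin-base-3b")` would place the files under `checkpoints/dolphin-base-3b`. For restricted repos, log in with `huggingface-cli login` first so the stored token is picked up.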

Environment Hints:

- Set `DOLPHIN_CACHE=./cache` to isolate downloads.

- Ensure you have write permissions to the cache and working directories.
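Both hints can be checked in one step before a long run. This is a sketch under the assumption that the tooling reads `DOLPHIN_CACHE` as described above; `prepare_cache` is a hypothetical helper, not a Dolphin API.

```python
import os
import tempfile
from pathlib import Path


def prepare_cache(default: str = "./cache") -> Path:
    """Resolve DOLPHIN_CACHE, create the folder, and confirm it is writable."""
    # setdefault keeps an existing value and only falls back to the default.
    cache = Path(os.environ.setdefault("DOLPHIN_CACHE", default)).resolve()
    cache.mkdir(parents=True, exist_ok=True)
    # Creating and deleting a temporary file is a direct write test;
    # it raises PermissionError if the directory is read-only.
    with tempfile.NamedTemporaryFile(dir=cache):
        pass
    return cache
```

A failure here surfaces as an exception at startup rather than as a half-finished download later.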

Demo Run and Expected Outputs

To run the demo:

python demo/run_demo.py --model dolphin-base-3b --input ./samples/doc1.png --output ./outputs/doc1.json --device cuda:0

If using CPU, replace `--device cuda:0` with `--device cpu` and expect longer runtimes.
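To run the demo over several files, the command can be assembled in Python. The sketch below reuses only the flags shown in the command above; `build_demo_command` and `run_demo` are hypothetical wrappers, and the script path `demo/run_demo.py` is taken from this guide rather than verified against the repository.

```python
import subprocess
import sys
from pathlib import Path


def build_demo_command(input_path: str, output_dir: str = "./outputs",
                       model: str = "dolphin-base-3b",
                       device: str = "cuda:0") -> list[str]:
    """Build the argv list for the demo, naming the output after the input file."""
    output_path = Path(output_dir) / (Path(input_path).stem + ".json")
    return [
        sys.executable, "demo/run_demo.py",
        "--model", model,
        "--input", input_path,
        "--output", str(output_path),
        "--device", device,
    ]


def run_demo(input_path: str, **kwargs) -> None:
    """Run the demo for one file; raises CalledProcessError on a non-zero exit."""
    subprocess.run(build_demo_command(input_path, **kwargs), check=True)
```

Passing the arguments as a list avoids shell quoting problems with file names that contain spaces, and `device="cpu"` switches to the CPU fallback.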

Expected outputs: A structured JSON describing per-page content, including text blocks, tables, and bounding boxes. Dolphin’s analyze-then-parse approach yields structured results.

Troubleshooting Cues

- If `pip` wheels fail, upgrade `pip` and retry.

- If you encounter Out-Of-Memory (OOM) errors on startup, reduce image resolution or use a smaller checkpoint (e.g., `dolphin-base-1b`).

- Ensure CUDA and cuDNN versions match your PyTorch wheels.
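For the OOM case, reducing resolution usually means capping the longer side of the page image while keeping its aspect ratio. The helper below is an illustrative sketch; `fit_within` is a hypothetical name and the default cap of 1600 pixels is an arbitrary starting point, not a value documented for Dolphin.

```python
def fit_within(width: int, height: int, max_side: int = 1600) -> tuple[int, int]:
    """Scale (width, height) down so the longer side is at most max_side."""
    longest = max(width, height)
    if longest <= max_side:
        return width, height  # never upscale
    scale = max_side / longest
    # Keep the aspect ratio and avoid zero-sized dimensions.
    return max(1, round(width * scale)), max(1, round(height * scale))
```

With Pillow, the result can be passed straight to `img.resize(fit_within(*img.size))`, since `Image.size` is a `(width, height)` tuple.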

Background

The Dolphin release aligns with the ACL 2025 paper “Dolphin: Document Image Parsing via Heterogeneous Anchor Prompting”. ByteDance notes spikes in scraping activity in the last six weeks, underscoring public usage and relevance.


End-to-End Inference Demo: From PDF/Page Images to Structured JSON

End-to-End Inference: Prepare Input, Run Model, Post-Process

Turning a document into structured data your app can act on is more about a smooth pipeline than a single clever trick. Here’s a concise, practical guide to go from a PDF to a clean JSON that’s ready for downstream use.

Step 1 — Input Preparation

For PDFs, convert to images using `pdf2image`. Ensure DPI is around 300 for legibility. Example:

import pdf2image

images = pdf2image.convert_from_path('input.pdf', dpi=300, fmt='png')

Step 2 — Load Model

Load the model from a local checkpoint:

from dolphin.model import DolphinModel

model = DolphinModel.from_pretrained('./checkpoints/dolphin-base-3b')

Step 3 — Inference Loop

Run the model on each image:

results = [model.infer(img) for img in images]

# Each result contains structured fields like 'text', 'tables', 'entities', and 'positions'

Step 4 — Post-processing

Aggregate per-page results into a single JSON object and save.

{
  "pages": [
    {
      "page_num": 1,
      "blocks": [...],
      "tables": [...],
      "text_blocks": [...],
      "entities": [...]
    },
    ...
  ]
}
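The aggregation step can be sketched as follows. It assumes each per-page result is a dictionary and uses the field names from the JSON layout above; `aggregate_pages` and `save_document` are hypothetical helpers, and the real result type returned by the model may differ.

```python
import json


def aggregate_pages(results: list[dict]) -> dict:
    """Wrap per-page results in a single document, numbering pages from 1."""
    pages = []
    for page_num, result in enumerate(results, start=1):
        page = {"page_num": page_num}
        # Copy the expected fields, defaulting to an empty list when a page lacks one.
        for key in ("blocks", "tables", "text_blocks", "entities"):
            page[key] = result.get(key, [])
        pages.append(page)
    return {"pages": pages}


def save_document(document: dict, path: str) -> None:
    """Write the aggregated document as UTF-8 JSON, keeping non-ASCII text readable."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(document, f, indent=2, ensure_ascii=False)
```

Filling missing fields with empty lists keeps the schema uniform, so downstream code does not need to check which keys a given page happens to have.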

Step 5 — Sample Python Snippet

A complete snippet for converting a PDF to structured JSON:

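import json

from dolphin.model import DolphinModel
from dolphin.utils import pdf_to_images

# Load the checkpoint once and reuse it for every page.
model = DolphinModel.from_pretrained('./checkpoints/dolphin-base-3b')

# Render each PDF page to an image at 300 DPI.
images = pdf_to_images('input.pdf', dpi=300)

# Parse page by page; each result must be JSON-serializable for the dump below.
pages = [model.infer(img) for img in images]

with open('output.json', 'w') as f:
    json.dump({'pages': pages}, f, indent=2)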

Note: Dolphin uses an analyze-then-parse approach to extract structured content from documents, which helps produce reliable tables and labeled fields rather than plain text blobs.

Optional: For large documents, process in batches and stream results to disk to avoid high memory usage. Validate parsed tables by confirming header rows and consistent column alignment across pages, especially for complex documents.
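One way to batch and stream is to split the document into page ranges, load one range at a time, and append each result as a JSON line. The sketch below is independent of Dolphin: `page_ranges` and `stream_pages` are hypothetical helpers, and `load_batch` and `infer` are callables you supply.

```python
import json
from typing import Callable, Iterable, Iterator


def page_ranges(total_pages: int, batch_size: int) -> Iterator[tuple[int, int]]:
    """Yield 1-based inclusive (first, last) page ranges covering the document."""
    for first in range(1, total_pages + 1, batch_size):
        yield first, min(first + batch_size - 1, total_pages)


def stream_pages(total_pages: int,
                 load_batch: Callable[[int, int], Iterable],
                 infer: Callable,
                 out_path: str,
                 batch_size: int = 8) -> int:
    """Load, parse, and write one batch at a time as JSON Lines; return pages written."""
    written = 0
    with open(out_path, "w", encoding="utf-8") as f:
        for first, last in page_ranges(total_pages, batch_size):
            # Only this batch of page images is held in memory.
            for offset, image in enumerate(load_batch(first, last)):
                record = {"page_num": first + offset, "result": infer(image)}
                f.write(json.dumps(record, ensure_ascii=False) + "\n")
                written += 1
            f.flush()  # results reach disk after every batch
    return written
```

With `pdf2image`, `load_batch` could wrap `convert_from_path('input.pdf', dpi=300, first_page=first, last_page=last)`, and the page count is available from `pdfinfo_from_path('input.pdf')['Pages']`. JSON Lines is used here instead of one large JSON object because it can be appended to without holding earlier pages in memory.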

Checkpoint Access, Model Formats, and Download Paths

This section compares Dolphin's checkpoint handling with that of competing tools.

| Aspect | Dolphin | Competitors |
|---|---|---|
| Model formats and paths | Checkpoints are typically available as Hugging Face-format files (e.g., `dolphin-base-3b`, `dolphin-large-7b`). Prefer the Hugging Face hub for straightforward loading with `DolphinModel.from_pretrained`. | Often offer multiple formats and packaging; loading can be less straightforward and may rely on non-Hugging Face sources or require extra steps to consolidate formats. |
| Direct download clarity | Official releases provide explicit, stable URLs on Hugging Face and GitHub. Ambiguous or multi-step download paths are avoided. | Downloads may be ambiguous, unstable, or multi-step, with less clear versioning and origin, which complicates reproducibility. |
| Access methods | Use `huggingface-cli login` for private models. For large assets, the Hugging Face CLI or Git LFS is recommended. Verify checksums after download when available. | Access methods vary; some rely on plain web downloads or different auth flows. Partial downloads are a risk, and checksum verification is not consistently provided. |
| Format conversion guidance | If TorchScript or ONNX is needed for performance, explicit conversion commands (e.g., `torch.jit.script`, `torch.onnx.export`) are provided with documented arguments and expected file names. | Conversion guidance is often sparse, with fewer explicit commands and documented steps; ad-hoc or community tools may be required. |
| Documentation structure | Organized into explicit sections: environment, model weights, demo usage, and sample code. | Often a single long page with minimal navigation, making it harder to locate specific topics. |
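Checksum verification, mentioned under access methods, needs nothing beyond the standard library. The sketch below assumes the publisher provides a SHA-256 hex digest; `sha256_of` and `verify_checksum` are hypothetical helper names.

```python
import hashlib


def sha256_of(path: str, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, reading it in 1 MiB chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # iter() with a sentinel stops when read() returns empty bytes at EOF.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_checksum(path: str, expected_hex: str) -> bool:
    """Compare a file's digest with the published value, ignoring case and whitespace."""
    return sha256_of(path) == expected_hex.strip().lower()
```

Reading in chunks keeps memory use flat even for multi-gigabyte weight files, and a mismatch is a strong sign of a partial or corrupted download.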

Hardware Requirements, Performance, and Practical Notes

- Clear hardware guidance: Explicit VRAM recommendations (16–24 GB, depending on model size) and CUDA compatibility notes assist users in selecting a feasible setup.

- Concrete performance expectations: Guidance for common workflows (document parsing with tables, structured extraction) and advice on batch sizes to avoid OOM errors are included.

- Analyze-then-parse capability: Dolphin’s core feature yields structured outputs (tables, entities) rather than free-form text, improving downstream usability for analytics.

- Memory considerations: For very large models or high-resolution inputs, GPU memory demands can be substantial. Plan for multi-GPU or offload strategies if needed.

- Inference speed: Highly dependent on hardware. Users without modern GPUs may face slower runtimes and might need smaller checkpoints or quantized variants.

- Post-processing: Some users may require additional tooling to transform extracted structures into downstream formats (CSV/JSON/Parquet). The guide suggests basic post-processing scripts but assumes familiarity with Python data handling.
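As an example of such post-processing, the sketch below validates one extracted table and writes it to CSV. It assumes a table arrives as a list of rows, each a list of cell strings, with the header in the first row; that layout is an assumption for illustration, not Dolphin's documented output, and `check_table` and `write_table_csv` are hypothetical helpers.

```python
import csv


def check_table(rows: list[list[str]]) -> list[str]:
    """Return a list of problems: empty table, blank header cells, ragged rows."""
    if not rows:
        return ["table is empty"]
    problems = []
    header = rows[0]
    if any(not str(cell).strip() for cell in header):
        problems.append("header row has blank cells")
    # Row numbers are 1-based and count the header as row 1.
    for index, row in enumerate(rows[1:], start=2):
        if len(row) != len(header):
            problems.append(f"row {index} has {len(row)} cells, expected {len(header)}")
    return problems


def write_table_csv(rows: list[list[str]], path: str) -> None:
    """Write a validated table to CSV; raise ValueError if validation fails."""
    problems = check_table(rows)
    if problems:
        raise ValueError("; ".join(problems))
    with open(path, "w", newline="", encoding="utf-8") as f:
        csv.writer(f).writerows(rows)
```

Validating before writing means a misaligned table is reported with its row number instead of silently producing a ragged CSV file.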
