DGTN Explained: Graph-Enhanced Transformer with Diffusive Attention Gating for Enzyme DDG Prediction

This article delves into DGTN, a novel approach for predicting changes in protein stability (ΔΔG) upon amino acid substitutions in enzymes. By leveraging graph neural networks and transformer architectures with a unique diffusive attention gating mechanism, DGTN aims to capture complex protein interactions more effectively than traditional methods.

What is DGTN and Why it Matters for Enzyme ΔΔG Prediction

DGTN stands for Graph-Enhanced Transformer with Diffusive Attention Gating. It is specifically designed for predicting the change in Gibbs free energy (ΔΔG) resulting from amino acid substitutions in enzymes. Accurate ΔΔG prediction is crucial for understanding enzyme stability and function, especially in high-throughput mutational studies where large datasets can be used for training and validation.

Key aspects of DGTN include:

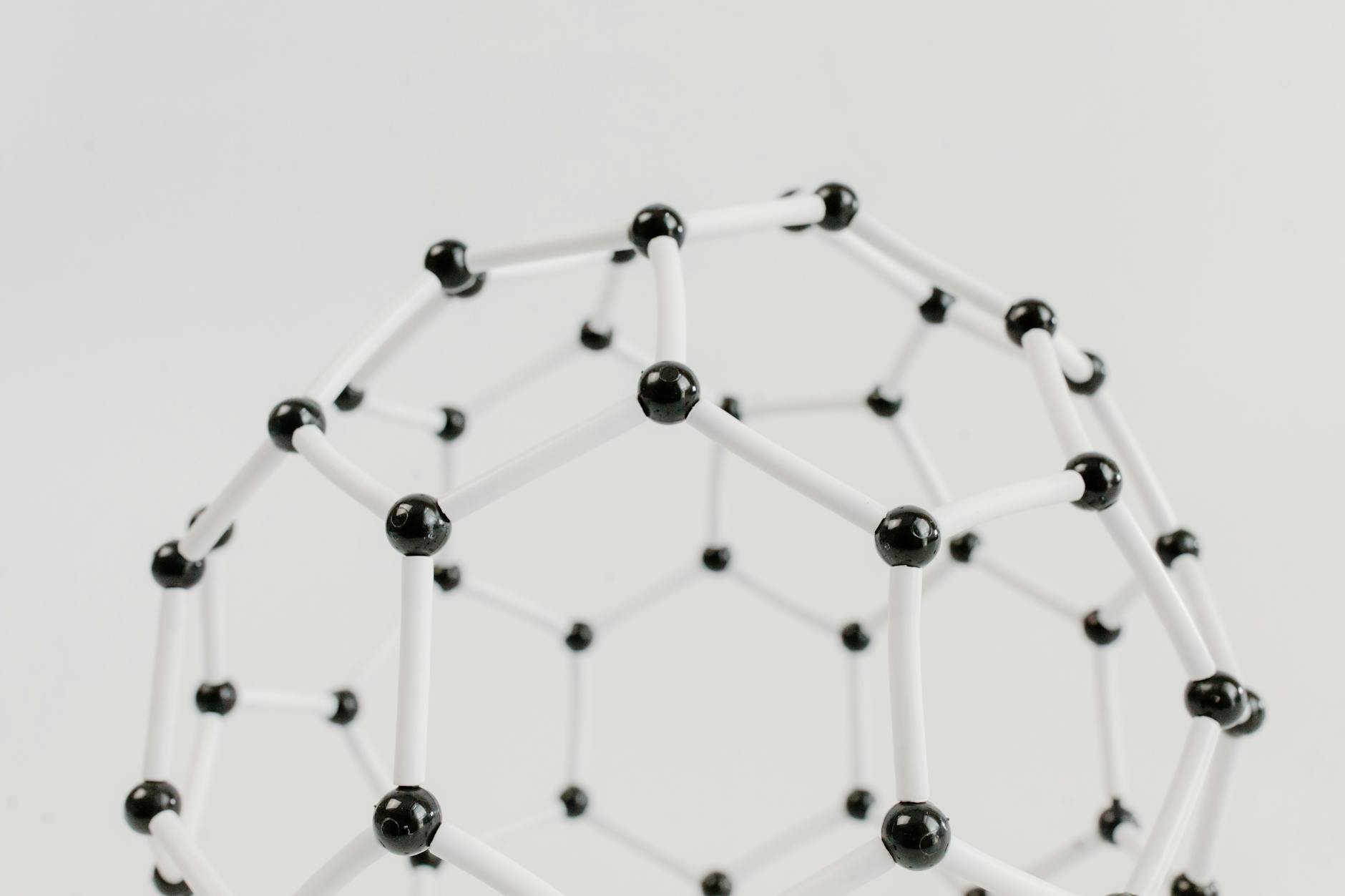

- Graph Representation: Protein residues are modeled as nodes, with edges representing covalent bonds and spatial proximity. Node features encompass residue type, conservation, solvent exposure, and structural context.

- Diffusive Attention Gating (DAG): This mechanism allows attention to diffuse across the graph, effectively capturing long-range interactions while simultaneously reducing noise and focusing on plausible signal paths.

- 3D Context and Allostery: DGTN models the intricate 3D structure of proteins and can capture allosteric effects (interactions at distant sites).

- Performance: DGTN is reported to outperform sequence-only baselines on DeepDDG-style benchmarks, which suggests that the added structural context helps when predicting stability changes in enzymes.

Graph-Enhanced Transformer Architecture: Components and Data Flow

Graph Construction and Node/Edge Features

In this approach, a protein is treated as a graph where each amino acid residue is a node. Edges connect these nodes, capturing both the protein chain’s covalent connections and spatial contacts between residues. This structure facilitates a powerful, bidirectional flow of information, enabling learning from both the protein’s structure and its sequence.

Nodes (Residues)

An enzyme with N residues is represented by N nodes. Each node is associated with a rich feature vector that typically includes:

- One-hot residue type: A 20-dimensional vector indicating the amino acid identity.

- Sequence position embedding: Represents the residue’s position within the primary sequence.

- Conservation score: Derived from multiple sequence alignments, indicating evolutionary importance.

- Solvent accessibility (RSA): A measure of how exposed the residue is to the solvent.

- Secondary structure label: Categorical information (e.g., helix, strand, coil).

- Backbone torsion context: Information about torsion angles (phi/psi) reflecting local geometry.
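As a rough illustration of how the per-residue features listed above might be concatenated into a single vector, here is a minimal sketch in plain Python. The function names, the three-class secondary structure vocabulary, and the sin/cos torsion encoding are assumptions made for this example, not details confirmed for DGTN. The sequence position embedding is left out because it would normally be a learned component.

```python
import math

AA_TYPES = ["ALA", "ARG", "ASN", "ASP", "CYS", "GLN", "GLU", "GLY", "HIS", "ILE",
            "LEU", "LYS", "MET", "PHE", "PRO", "SER", "THR", "TRP", "TYR", "VAL"]
SS_TYPES = ["H", "E", "C"]  # helix, strand, coil (assumed 3-class labelling)


def one_hot(item, vocabulary):
    """Return a one-hot list; unknown items map to an all-zero vector."""
    return [1.0 if item == v else 0.0 for v in vocabulary]


def node_features(resname, conservation, rsa, ss, phi_deg, psi_deg):
    """Concatenate per-residue features into one flat vector."""
    phi, psi = math.radians(phi_deg), math.radians(psi_deg)
    return (
        one_hot(resname, AA_TYPES)         # 20 dims: residue identity
        + [conservation, rsa]              # 2 dims: both assumed scaled to [0, 1]
        + one_hot(ss, SS_TYPES)            # 3 dims: secondary structure
        + [math.sin(phi), math.cos(phi),   # 4 dims: torsions as sin/cos so that
           math.sin(psi), math.cos(psi)]   #         -180 and +180 degrees coincide
    )


features = node_features("LYS", conservation=0.8, rsa=0.35, ss="H",
                         phi_deg=-60.0, psi_deg=-45.0)
print(len(features))  # 29
```

Encoding angles as sine/cosine pairs is a common way to avoid the discontinuity at ±180°; whether DGTN does this is not stated in the article.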

Edges

Edges connect residue pairs and can be of two main types:

- Covalent edges: Connect sequential neighbors along the peptide chain (residues i and i+1).

- Spatial proximity edges: Connect residue pairs that are close in 3D space, typically within a defined distance cutoff (e.g., 8 Å between Cα atoms). A variant uses k-Nearest Neighbors (kNN) to connect each node to its 12 nearest spatial neighbors, creating a dense local graph view.
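The two spatial edge rules described above (distance cutoff and kNN) can be sketched as follows. This is a dependency-free illustration over hypothetical Cα coordinates; `spatial_edges` is a name invented for this example, and a real pipeline would likely use a vectorized or tree-based neighbour search instead of the quadratic loop shown here.

```python
import math


def spatial_edges(coords, cutoff=8.0, k=None):
    """Return directed (i, j, distance) edges between residues.

    coords: list of (x, y, z) C-alpha positions in angstroms.
    If k is None, connect all pairs within `cutoff`; otherwise connect each
    residue to its k nearest neighbours regardless of distance.
    """
    edges = []
    for i, ci in enumerate(coords):
        # Distances from residue i to every other residue, nearest first.
        dists = sorted(
            (math.dist(ci, cj), j) for j, cj in enumerate(coords) if j != i
        )
        if k is None:
            chosen = [(d, j) for d, j in dists if d <= cutoff]
        else:
            chosen = dists[:k]
        edges.extend((i, j, d) for d, j in chosen)
    return edges


# Four hypothetical C-alpha positions spaced 3.8 A apart along the x axis.
ca = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0), (11.4, 0.0, 0.0)]
cutoff_edges = spatial_edges(ca, cutoff=8.0)
knn_edges = spatial_edges(ca, k=2)
print(len(cutoff_edges), len(knn_edges))  # 10 8
```

Note that kNN edges are not automatically symmetric: in this toy chain residue 0 selects residue 2, but residue 2 does not select residue 0. If the graph is to be treated as undirected, the reverse edges would need to be added explicitly.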

Edge Features

Each edge carries features that describe the interaction:

- Distance dij: The Euclidean distance between the residues (often Cα atoms).

- Relative orientation cues: Information about how residue frames are oriented relative to each other.

- Edge_type flags: Distinguishes between covalent and spatial edges.

Graph Directionality

Graphs are treated as undirected to allow bidirectional message passing, ensuring information can flow freely between connected residues.

In essence, nodes capture residue identity and local context, while edges encode chain continuity and spatial relationships. This forms a rich, biologically meaningful scaffold for learning from enzyme structures.

Transformer Core and Graph Attention Integration

The model integrates a transformer core with graph attention mechanisms to process protein information. Think of a protein as a dynamic graph where residues interact, structural context influences these interactions, and information propagates to capture long-range effects.

Graph-Attention Stack

A stack of graph-attention layers processes node embeddings, incorporating structural biases from the adjacency matrix. Multiple attention heads are used to capture diverse interaction patterns among residues.

Positional Encoding

Sequence position is combined with local structural context (secondary structure, RSA) to preserve order information within the graph. This helps differentiate between residues close in sequence versus those close in 3D space.

Diffusive Attention Gating (DAG)

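The core innovation, DAG, computes a gate $G_{ij}^{(t)}$ for each edge $(i, j)$ at each diffusion step $t$:

$$G_{ij}^{(t)} = \sigma\left(W_{\text{gate}}\left[h_i^{(t)},\ h_j^{(t)},\ d_{ij},\ s_i,\ s_j,\ \text{edge\_type}_{ij}\right]\right)$$

Here $h_i^{(t)}$ and $h_j^{(t)}$ are the embeddings of residues $i$ and $j$ at step $t$, $d_{ij}$ is the inter-residue distance, $\text{edge\_type}_{ij}$ flags covalent versus spatial edges, and $\sigma$ is the sigmoid function. The terms $s_i$ and $s_j$ are not defined explicitly; they presumably denote per-residue structural context features. Attention weights are then scaled by this gate before aggregation, so each edge passes information selectively depending on the diffusion step and its context.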
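To make the gate computation concrete, here is a minimal sketch in plain Python on a three-residue toy graph. The weight vector, the scalar `s` values, the uniform attention weights, and the function names are all hypothetical. The article does not say whether attention is renormalized after gating, so this sketch simply multiplies attention by the gate and sums.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def gate(w, h_i, h_j, d_ij, s_i, s_j, edge_type):
    """Gate value sigmoid(w . [h_i, h_j, d_ij, s_i, s_j, edge_type])."""
    z = h_i + h_j + [d_ij, s_i, s_j, edge_type]  # list concatenation
    return sigmoid(sum(wk * zk for wk, zk in zip(w, z)))


def gated_aggregate(h, neighbours, attn, gates):
    """Update node i as sum over neighbours j of attn[i][j] * gates[i][j] * h[j]."""
    dim = len(h[0])
    out = []
    for i in range(len(h)):
        acc = [0.0] * dim
        for j in neighbours[i]:
            weight = attn[i][j] * gates[i][j]
            for k in range(dim):
                acc[k] += weight * h[j][k]
        out.append(acc)
    return out


rng = random.Random(0)
h = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(3)]  # 3 residues, 2-dim embeddings
neighbours = {0: [1], 1: [0, 2], 2: [1]}
dist = {(0, 1): 3.8, (1, 0): 3.8, (1, 2): 6.5, (2, 1): 6.5}      # angstroms
w = [rng.uniform(-1, 1) for _ in range(2 * 2 + 4)]               # hypothetical gate weights
s = [0.2, 0.5, 0.9]                                              # hypothetical per-residue scalar

# Uniform attention over neighbours stands in for learned attention scores.
attn = {i: {j: 1.0 / len(js) for j in js} for i, js in neighbours.items()}
gates = {i: {j: gate(w, h[i], h[j], dist[(i, j)], s[i], s[j], 0.0) for j in js}
         for i, js in neighbours.items()}
h_new = gated_aggregate(h, neighbours, attn, gates)
```

Because the gate lies strictly between 0 and 1, it can only attenuate an edge's contribution, never amplify it, which matches the description of the gate as a filter on attention.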

Graph Diffusion

Diffusion runs for D steps (e.g., 3–5), propagating information across the graph to model long-range coupling. Keeping D small and gating each edge is intended to limit over-smoothing, the failure mode in which node features become indistinguishable after repeated propagation.
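The effect of the step count D on how far information travels can be illustrated with a small ungated example. The update rule below (a residual mix of each node with the mean of its neighbours) is an assumption chosen for simplicity, not the propagation rule used by DGTN, and the gate is omitted so that only the reach of the diffusion is visible.

```python
def diffuse(h, neighbours, steps, alpha=0.5):
    """Run `steps` rounds of h_i <- (1 - alpha) * h_i + alpha * mean_j h_j."""
    for _ in range(steps):
        h = [
            (1.0 - alpha) * h[i]
            + alpha * sum(h[j] for j in neighbours[i]) / len(neighbours[i])
            for i in range(len(h))
        ]
    return h


# Path graph 0-1-2-3-4-5 with a unit scalar signal placed on residue 0.
n = 6
neighbours = {i: [j for j in (i - 1, i + 1) if 0 <= j < n] for i in range(n)}
signal = [1.0] + [0.0] * (n - 1)

after_3 = diffuse(signal, neighbours, steps=3)
reach = [i for i, v in enumerate(after_3) if v > 0.0]
print(reach)  # [0, 1, 2, 3]
```

After three steps the signal has reached residues up to three hops away and no further, which is why D bounds the range of couplings a single diffusion pass can express.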

Final Representation and Prediction

A final protein representation is obtained via an attention-weighted pooling over residues. This representation is then fed into a regression head to predict ΔΔG in kcal/mol. The architecture is designed to be transparent and modular, blending sequence, structure, and long-range interactions.
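The pooling and regression step can be sketched as below. This is a plain-Python illustration with a hypothetical query vector `q` and hypothetical head weights; in a trained model these would be learned parameters, and the head could well be a small MLP and not a single linear layer.

```python
import math


def attention_pool(h, q):
    """Softmax-weighted average of residue embeddings h using query vector q."""
    scores = [sum(qk * hk for qk, hk in zip(q, hi)) for hi in h]
    m = max(scores)                                  # subtract max for numerical stability
    exps = [math.exp(sc - m) for sc in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(h[0])
    pooled = [sum(wt * hi[k] for wt, hi in zip(weights, h)) for k in range(dim)]
    return pooled, weights


def regression_head(pooled, w, b):
    """Linear map from the pooled embedding to a scalar prediction (kcal/mol)."""
    return sum(wk * pk for wk, pk in zip(w, pooled)) + b


h = [[0.1, 0.4], [0.9, -0.2], [0.3, 0.3]]            # 3 residues, 2-dim embeddings
pooled, weights = attention_pool(h, q=[1.0, 0.0])
ddg = regression_head(pooled, w=[0.5, -1.0], b=0.1)
print(weights, ddg)
```

The pooling weights sum to one, so they can also be read as a rough per-residue importance score for a given prediction.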

The Diffusive Attention Gating Mechanism

Attention in protein models must balance local chemical details with distant allosteric signals. DAG achieves this by linking attention to how signals diffuse from neighboring residues. Essentially, a distal interaction receives attention only if a plausible diffusion path exists from its local neighborhood. This mechanism:

- Reduces unlikely distal interactions: Unless diffusion pathways support them, distant interactions are less likely to be considered.

- Improves robustness to noise: Spurious, far-off structural signals are suppressed unless diffusion from nearby residues provides corroboration.

- Adapts across layers and diffusion steps: Early layers focus on local chemistry with tighter gates. As diffusion progresses and layers deepen, gates relax, allowing more distant cues. This progressive reveal captures allosteric effects.

- Acts as a learned filter: It prunes unreliable correlations while preserving meaningful long-range dependencies, learned through training.

How Gate Behavior Evolves

The gating behavior adapts based on the layer and diffusion step:

| Aspect | Early layers / Low diffusion | Middle layers / Moderate diffusion | Late layers / High diffusion |
|---|---|---|---|
| Attention focus | Local chemistry | Broader cues | Long-range, allosteric signals |
| Gate tendency | Restricts distant signals | Balances local and distant signals | Allows distant interactions when diffusion supports them |

Training Data Pipeline and Loss Function for DDG Prediction

Data Sources and Preprocessing

The training data for DGTN is compiled from curated ΔΔG records for enzymes, integrating data from ProTherm and DeepDDG-style benchmarks. Each record includes mutation details and experimental context.

- Mutation details: Type, position, wild-type and mutant amino acids, measured ΔΔG, and experimental conditions.

- Data sources: Enzyme mutations from ProTherm-derived compilations and DeepDDG benchmarks.

- Standardization: ΔΔG values are standardized to kcal/mol. Ambiguous or conflicting measurements are filtered.
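The standardization and filtering step might look like the following sketch. The record layout, the protein ID `1ABC`, and the 1.0 kcal/mol disagreement threshold are hypothetical choices for illustration; only the unit conversion (1 kcal = 4.184 kJ) is a fixed fact. Sign conventions for ΔΔG also differ between sources and would need to be harmonized separately.

```python
from collections import defaultdict
from statistics import mean

KJ_PER_KCAL = 4.184


def to_kcal(value, unit):
    """Convert a ddG value to kcal/mol."""
    if unit == "kcal/mol":
        return value
    if unit == "kJ/mol":
        return value / KJ_PER_KCAL
    raise ValueError(f"unsupported unit: {unit}")


def consolidate(records, max_spread=1.0):
    """Average repeated measurements; drop mutations whose values disagree.

    records: iterable of (protein_id, mutation, value, unit).
    max_spread: largest allowed (max - min) in kcal/mol among repeats;
                an assumed threshold, not one given in the article.
    """
    grouped = defaultdict(list)
    for protein_id, mutation, value, unit in records:
        grouped[(protein_id, mutation)].append(to_kcal(value, unit))
    return {
        key: mean(values)
        for key, values in grouped.items()
        if max(values) - min(values) <= max_spread
    }


records = [
    ("1ABC", "A45G", 1.2, "kcal/mol"),
    ("1ABC", "A45G", 4.184, "kJ/mol"),   # equals 1.0 kcal/mol
    ("1ABC", "L10P", 3.0, "kcal/mol"),
    ("1ABC", "L10P", -0.5, "kcal/mol"),  # conflicts with the measurement above
]
clean = consolidate(records)
print(clean)
```

Here the two A45G measurements are averaged, while L10P is dropped because its repeats differ by more than the threshold.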

To align mutation data with protein structures, PDB files are matched and preprocessed for graph construction. If experimental annotations (like secondary structure or solvent accessibility) are missing, these features are predicted to ensure informative graph representations.

Hyperparameters and Resource Constraints

The practical setup for DGTN balances accuracy with training efficiency:

- Graph layers: 4–6 graph-attention layers with a hidden dimension of 128–256 and 4 attention heads per layer. Diffusion steps D = 3–5. Dropout rate of 0.1–0.2. Weight decay around 1e-4.

- Optimizer and training: Adam optimizer with a learning rate of 1e-4. Early stopping based on validation RMSE. Batch size between 8 and 32.

- Hardware: A single modern GPU (e.g., NVIDIA A100 or RTX 3090) is often sufficient for mid-sized enzymes. Data parallelism can be used for larger tasks.
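One way to keep these settings in a single place is a small configuration object that rejects values outside the ranges quoted above. The class name, field names, and defaults below are hypothetical; only the ranges come from the article.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DGTNConfig:
    """Hypothetical config container; field names are illustrative."""
    num_layers: int = 4           # article range: 4-6
    hidden_dim: int = 128         # article range: 128-256
    num_heads: int = 4
    diffusion_steps: int = 3      # article range: 3-5
    dropout: float = 0.1          # article range: 0.1-0.2
    weight_decay: float = 1e-4
    learning_rate: float = 1e-4
    batch_size: int = 16          # article range: 8-32

    def __post_init__(self):
        checks = {
            "num_layers": 4 <= self.num_layers <= 6,
            "hidden_dim": 128 <= self.hidden_dim <= 256,
            "diffusion_steps": 3 <= self.diffusion_steps <= 5,
            "dropout": 0.1 <= self.dropout <= 0.2,
            "batch_size": 8 <= self.batch_size <= 32,
            "hidden_dim divisible by num_heads": self.hidden_dim % self.num_heads == 0,
        }
        failed = [name for name, ok in checks.items() if not ok]
        if failed:
            raise ValueError(f"out-of-range settings: {failed}")


config = DGTNConfig(hidden_dim=256, diffusion_steps=5)
print(asdict(config))
```

Serializing the config with `asdict` makes it easy to log the exact settings alongside each run's metrics.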

Evaluation Metrics and Baselines

Evaluation Metrics

The model’s performance is assessed using standard metrics:

- Root Mean Squared Error (RMSE) and Mean Absolute Error (MAE): Measure prediction accuracy in kcal/mol.

- Pearson correlation (r): Measures the strength of the linear relationship between predicted and experimental ΔΔG values. It does not assess ranking directly; a rank-based measure such as Spearman correlation would be needed for that.
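For reference, the three metrics can be computed with a few lines of plain Python. The example values are made up for illustration and are not results from DGTN.

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def pearson(y_true, y_pred):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(y_true)
    mean_t, mean_p = sum(y_true) / n, sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)


# Made-up experimental and predicted values in kcal/mol.
experimental = [1.0, -0.5, 2.0, 0.0]
predicted = [0.8, -0.1, 1.5, 0.3]
print(rmse(experimental, predicted), mae(experimental, predicted),
      pearson(experimental, predicted))
```

RMSE penalizes large errors more heavily than MAE, so reporting both gives a sense of whether the error is dominated by a few badly predicted mutations.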

Evaluations use several data splits (per-mutation, per-enzyme, and cross-family) to measure both fine-grained accuracy and generalization to unseen protein families.
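A per-enzyme split differs from a per-mutation split in that all mutations of a given enzyme land on the same side, which prevents the model from being tested on a protein it has already seen. A minimal sketch, with a hypothetical record layout and function name:

```python
import random
from collections import defaultdict


def split_by_enzyme(records, test_fraction=0.2, seed=0):
    """Assign whole enzymes to train or test so no enzyme appears in both."""
    by_enzyme = defaultdict(list)
    for rec in records:
        by_enzyme[rec["enzyme"]].append(rec)
    enzymes = sorted(by_enzyme)                  # sort first so the shuffle is reproducible
    random.Random(seed).shuffle(enzymes)
    n_test = max(1, round(test_fraction * len(enzymes)))
    test_ids = set(enzymes[:n_test])
    train = [r for e in enzymes if e not in test_ids for r in by_enzyme[e]]
    test = [r for e in enzymes if e in test_ids for r in by_enzyme[e]]
    return train, test


# Hypothetical records: five enzymes with two mutations each.
records = [{"enzyme": f"E{i}", "mutation": m} for i in range(5) for m in ("A10G", "L22P")]
train, test = split_by_enzyme(records)
print(len(train), len(test))  # 8 2
```

A cross-family split follows the same pattern with the family identifier used as the grouping key in place of the enzyme identifier.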

Baselines and Ablations

DGTN is compared against:

- DeepDDG-style neural nets: A strong, common baseline for ΔΔG prediction.

- Sequence-based predictors: To isolate the impact of structural information.

- Graph models without diffusion gating: To highlight the contribution of the DAG component.

Ablation studies quantify the impact of specific components like different edge types (covalent vs. spatial) and the diffusion gating mechanism itself.

Practical Implementation, Training, Evaluation, and Experiments

Training Protocol and Loss Function

The task is framed as a regression problem to predict ΔΔG. The primary loss function is Mean Squared Error (MSE) with L2 regularization. Huber loss can be used for increased robustness to outliers.

- Data splits: Stratified sampling across enzymes to maintain diversity, followed by cross-validation for generalization assessment.

- Model selection: Candidate models are compared using validation RMSE and Pearson correlation. The best configuration is retrained on the full training set and evaluated on a held-out test set.
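The difference between the MSE and Huber losses mentioned in this section is easiest to see on a pair of predictions where one is a large outlier. The sketch below uses plain Python and a threshold of `delta=1.0`, which is an assumed value; L2 regularization is not shown because it is usually applied through the optimizer's weight decay.

```python
def mse_loss(y_true, y_pred):
    """Mean squared error."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def huber_loss(y_true, y_pred, delta=1.0):
    """Quadratic for errors up to delta, linear beyond it."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        err = abs(t - p)
        if err <= delta:
            total += 0.5 * err ** 2
        else:
            total += delta * (err - 0.5 * delta)
    return total / len(y_true)


y_true = [0.0, 0.0]
y_pred = [0.5, 5.0]   # the second prediction is a large outlier
print(mse_loss(y_true, y_pred), huber_loss(y_true, y_pred))  # 12.625 2.3125
```

The outlier contributes 25.0 to the squared error but only 4.5 to the Huber sum, so a few noisy ΔΔG measurements have much less influence on the gradient.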

Data Handling and Reproducibility

Reproducibility is ensured through:

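- Fixing random seeds: Across Python, NumPy, and PyTorch. Seeding makes runs repeatable on the same setup, although some GPU operations can remain nondeterministic unless deterministic algorithms are also enabled.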

- Saving model states: Regular snapshots of model weights and optimizer state for training resumption and ablation comparisons.

- Centralized logging: Using formats like JSON Lines to track hyperparameters and metrics.
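The seeding and logging practices above can be sketched as follows. The helper names and the logged values are hypothetical. NumPy and PyTorch are seeded only if they are installed, so the snippet also runs in a bare Python environment.

```python
import json
import os
import random
import tempfile


def seed_everything(seed):
    """Seed Python's RNG, plus NumPy and PyTorch when they are installed."""
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass
    try:
        import torch
        torch.manual_seed(seed)
    except ImportError:
        pass


def log_jsonl(path, record):
    """Append one JSON object per line (JSON Lines format)."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, sort_keys=True) + "\n")


# Re-seeding reproduces the same random draw.
seed_everything(42)
first = random.random()
seed_everything(42)
second = random.random()

# Illustrative metric values, not real training results.
log_path = os.path.join(tempfile.mkdtemp(), "run.jsonl")
log_jsonl(log_path, {"epoch": 1, "val_rmse": 1.23, "lr": 1e-4})
log_jsonl(log_path, {"epoch": 2, "val_rmse": 1.10, "lr": 1e-4})
```

Because each line is an independent JSON object, the log can be appended to safely during training and parsed line by line afterwards.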

Companion Data Preprocessor

A preprocessor is used to:

- Map mutations to graph indices.

- Compute per-node and per-graph features.

- Export graph data in standard formats (e.g., DGL, PyTorch Geometric).

Code Snippet: Graph Construction

A simplified Python example using PyTorch Geometric shows how to build a residue-level graph and map mutations to node indices. The PDB parser is a stub that returns hard-coded residues, and the graph contains only sequential edges and one-hot residue features; spatial edges and the remaining node features are omitted for brevity.

```python
import re
from typing import List, Tuple

import torch
from torch_geometric.data import Data


# Placeholder: replace with real PDB parsing to obtain (chain, residue_number, resname).
def parse_pdb_residues(pdb_path: str) -> List[Tuple[str, int, str]]:
    """
    Return a list of residues as (chain, residue_number, resname), in chain order.

    This is a stub; integrate a proper parser for production use.
    """
    # Example data for a short protein segment
    return [
        ('A', 1, 'ALA'), ('A', 2, 'GLY'), ('A', 3, 'LYS'), ('A', 4, 'SER'),
        ('B', 1, 'MET'), ('B', 2, 'THR'),
    ]


def build_graph_from_pdb(pdb_path: str, mutations: List[str]) -> Data:
    """
    Build a simple residue-level graph:

    - Nodes: one per residue (ordered by chain and residue number)
    - Edges: sequential connectivity within each chain
    - Node features: one-hot encoding over 20 standard amino acids (simplified)
    - y: indices of mutated residues (empty if none match)
    """
    residues = parse_pdb_residues(pdb_path)
    N = len(residues)

    # Standard amino acid types and mapping
    aa_types = [
        'ALA', 'ARG', 'ASN', 'ASP', 'CYS', 'GLN', 'GLU', 'GLY', 'HIS', 'ILE',
        'LEU', 'LYS', 'MET', 'PHE', 'PRO', 'SER', 'THR', 'TRP', 'TYR', 'VAL',
    ]
    aa_to_idx = {aa: i for i, aa in enumerate(aa_types)}

    # Non-standard residues keep an all-zero feature row.
    x = torch.zeros((N, len(aa_types)), dtype=torch.float)
    for i, (_chain, _resnum, resname) in enumerate(residues):
        if resname in aa_to_idx:
            x[i, aa_to_idx[resname]] = 1.0

    # Edges: chain-wise sequential connectivity, stored in both directions
    edges = []
    current_chain = None
    last_idx = None
    for i, (chain, _resnum, _resname) in enumerate(residues):
        if chain != current_chain:
            current_chain = chain
            last_idx = None  # do not link the last residue of one chain to the next chain
        if last_idx is not None:
            edges.append([last_idx, i])
            edges.append([i, last_idx])
        last_idx = i

    if edges:
        edge_index = torch.tensor(edges, dtype=torch.long).t().contiguous()
    else:
        # Keep the expected [2, num_edges] shape even when there are no edges.
        edge_index = torch.empty((2, 0), dtype=torch.long)

    data = Data(x=x, edge_index=edge_index)

    # Map mutations to node indices. In this example the string "A123T" is read as
    # chain A, residue 123, mutant T; the leading letter is a chain ID here, not
    # the wild-type residue as in the common wild-type/position/mutant notation.
    mut_indices = []
    for mut in mutations:
        m = re.match(r'^([A-Z])(\d+)([A-Z])$', mut)
        if not m:
            continue  # skip strings that do not match the expected format
        chain = m.group(1)
        pos = int(m.group(2))
        # Find the node with matching chain and residue number
        for idx, (c, rnum, _resname) in enumerate(residues):
            if c == chain and rnum == pos:
                mut_indices.append(idx)
                break

    # An empty list yields an empty long tensor, so no special case is needed.
    data.y = torch.tensor(mut_indices, dtype=torch.long)
    return data


# Example usage:
# pdb_path = "path/to/protein.pdb"
# mutations = ["A3T", "B1G"]  # Adjusted for stub data
# graph = build_graph_from_pdb(pdb_path, mutations)
# print(graph)
```

Comparison with Existing Methods

DGTN distinguishes itself from standard sequence-only approaches by employing a graph-based representation and a diffusion gating mechanism. This allows it to process both local structural details and long-range dependencies in a single pass, a challenge for purely sequence-based predictors.

DGTN vs. Traditional Sequence-Based Predictors

| Aspect | DGTN | Traditional sequence-based predictors |
|---|---|---|
| Input representation | Graph-structured input connecting residues based on structural or functional relationships. | Linear sequence of amino acids processed sequentially. |
| Context modeling | Diffusive attention gating propagates information locally and across long-range couplings. | Primarily local context within fixed windows; long-range dependencies are difficult to model. |
| Diffusion gating | Explicit mechanism controlling information spread across the graph. | No explicit diffusion mechanism; standard attention or convolution methods are used. |
| Robustness to noise | Diffusion gating enhances robustness by smoothing noisy signals while preserving important ones. | Performance can degrade with noisy inputs; limited explicit noise handling. |
| Generalization across enzyme families | Graph structure and diffusion gating facilitate generalization by enabling controlled information sharing. | Generalization often requires retraining or extensive fine-tuning for different families. |

Takeaway: DGTN’s combination of graph-structured inputs and diffusive attention gating allows it to effectively model local contexts and long-range couplings, enhancing robustness to noise and generalization across enzyme families.

Comparison Table: DGTN vs. Baselines and Alternatives

| Model | Input | Architecture | Pros | Cons | Evaluation & Resource Notes |
|---|---|---|---|---|---|
| DeepDDG | Mutation list + sequence-based features | Feed-forward network mapping features to ΔΔG | Strong baseline, fast | Limited modeling of long-range structural coupling | RMSE, MAE, Pearson r on enzyme ΔΔG datasets; training time and memory considerations. |
| Graph-only GNN baseline | Graph with local edges | Graph neural network without diffusion gating | Good at local interactions | Misses long-range allostery; gating absent | RMSE, MAE, Pearson r on enzyme ΔΔG datasets; training time and memory considerations. |
| DGTN | Graph with residues and structural features | Graph-attention transformer + diffusion gating | Captures local and long-range coupling; gating reduces noise; robust to structural variation | Requires reasonably accurate 3D structures; higher computational cost than sequence-based models; performance depends on hyperparameter tuning and data quality. | RMSE, MAE, Pearson r on enzyme ΔΔG datasets; training time and memory considerations. |

Pros and Cons of the DGTN Approach

- Pros: Explicitly models 3D context and allostery; diffusive gating improves discovery of long-range couplings; scalable to diverse enzyme sizes; adaptable across mutation types.

- Cons: Requires reasonably accurate 3D structures; higher computational cost than purely sequence-based models; performance depends on careful hyperparameter tuning and data quality.
